Practical Guide for Enterprise Buyers

AI Document Processing Without Losing Control of Your Data

AI document processing is moving quickly. Organisations are being asked to do more with contracts, records, forms, correspondence, policies, claims, invoices, employee files, and case documents — often without adding headcount or slowing down existing operations. The promise is real: faster classification, better search, automated metadata extraction, summarisation, risk flagging, and new ways to understand large document collections.

But for many enterprises, the first question is not "Can AI read our documents?" It is: "Can we use AI without losing control of our data, governance model, compliance obligations, and audit trail?"

That concern is reasonable. Documents often contain sensitive business information, personal information, regulated records, legal obligations, financial data, employee data, customer communications, and operational history. Applying AI to that content should not mean copying it into opaque third-party systems, weakening access controls, or creating outputs that cannot be explained, reviewed, validated, or governed. This guide outlines a practical approach to AI document processing — using FormKiQ's architecture on AWS as a reference model — for organisations that want the benefits of AI while maintaining enterprise-grade control.

1. Start with the Business Problem, Not the AI Feature

AI document processing works best when it is tied to a specific operational problem. A common mistake is to begin with a broad question such as "How can we use AI on our documents?" That usually leads to unclear scope, inconsistent results, and stakeholder discomfort.

A better starting point is: Which document-heavy process is costly, slow, risky, or difficult to scale today?

| Problem | Challenge | AI approach |
|---|---|---|
| Classification bottleneck | Documents arrive from multiple channels; staff manually sort and tag each one before it enters a workflow. | AI identifies document type at the point of ingestion and applies classification metadata automatically. |
| Data entry burden | Staff manually read invoices, forms, and applications to extract key data and enter it into business systems. | OCR and AI extraction pull structured data from documents (names, dates, amounts, identifiers) and apply it as searchable metadata. |
| Review volume | Reviewers process hundreds of documents daily and cannot read each one in full before making triage decisions. | AI summarisation produces concise summaries for triage, allowing reviewers to prioritise and focus on documents that require detailed attention. |
| Hidden obligations | Contracts contain obligations, milestones, and renewal dates buried in lengthy text; staff discover them late or not at all. | AI analyses contract text and extracts obligations, deadlines, and key terms as structured, trackable metadata. |
| Sensitivity exposure | Documents containing PII, PHI, or confidential information are uploaded without appropriate access controls because nobody classified them at intake. | AI sensitivity classification identifies documents containing sensitive content and triggers appropriate access restrictions automatically. |
| Governance gaps | Large document collections have incomplete metadata, inconsistent classification, or missing retention categories. | AI analyses existing collections and suggests classifications, identifies missing metadata, and flags governance gaps for remediation. |

Once the problem is clear, AI can be evaluated as one part of a controlled process — not as an open-ended experiment.

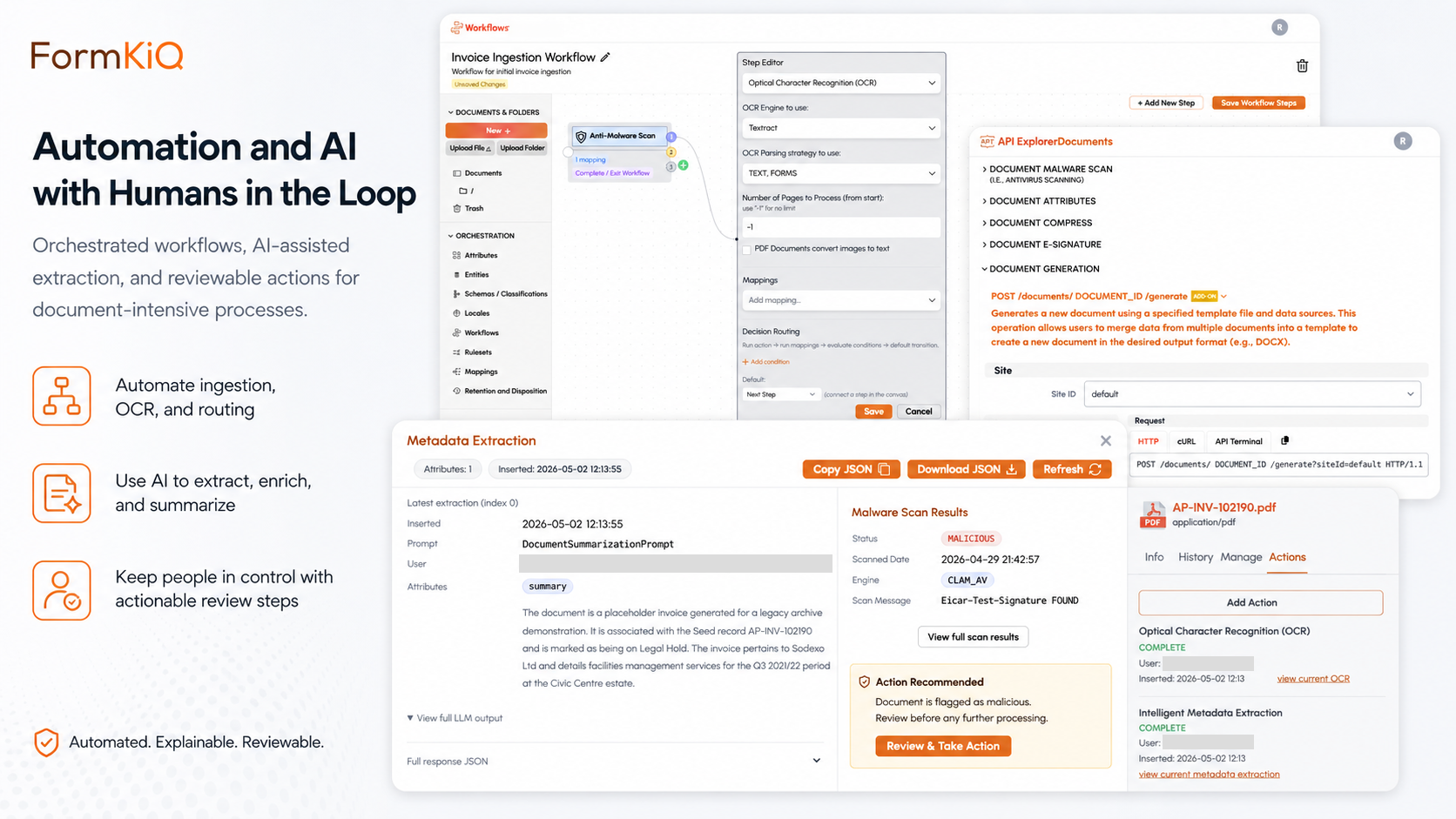

A legal operations team may not need "AI for contracts" in general. It may need to identify renewal dates, notice periods, governing law, assignment clauses, and termination rights across a specific set of supplier agreements. That narrower problem is easier to test, govern, validate, and improve. In FormKiQ, this translates to configuring the AI Processing and Analysis module — powered by Amazon Bedrock — to analyse supplier agreements and extract specific clause types as structured metadata, running within the organisation's own AWS account.

2. Know What Data the AI Will Touch

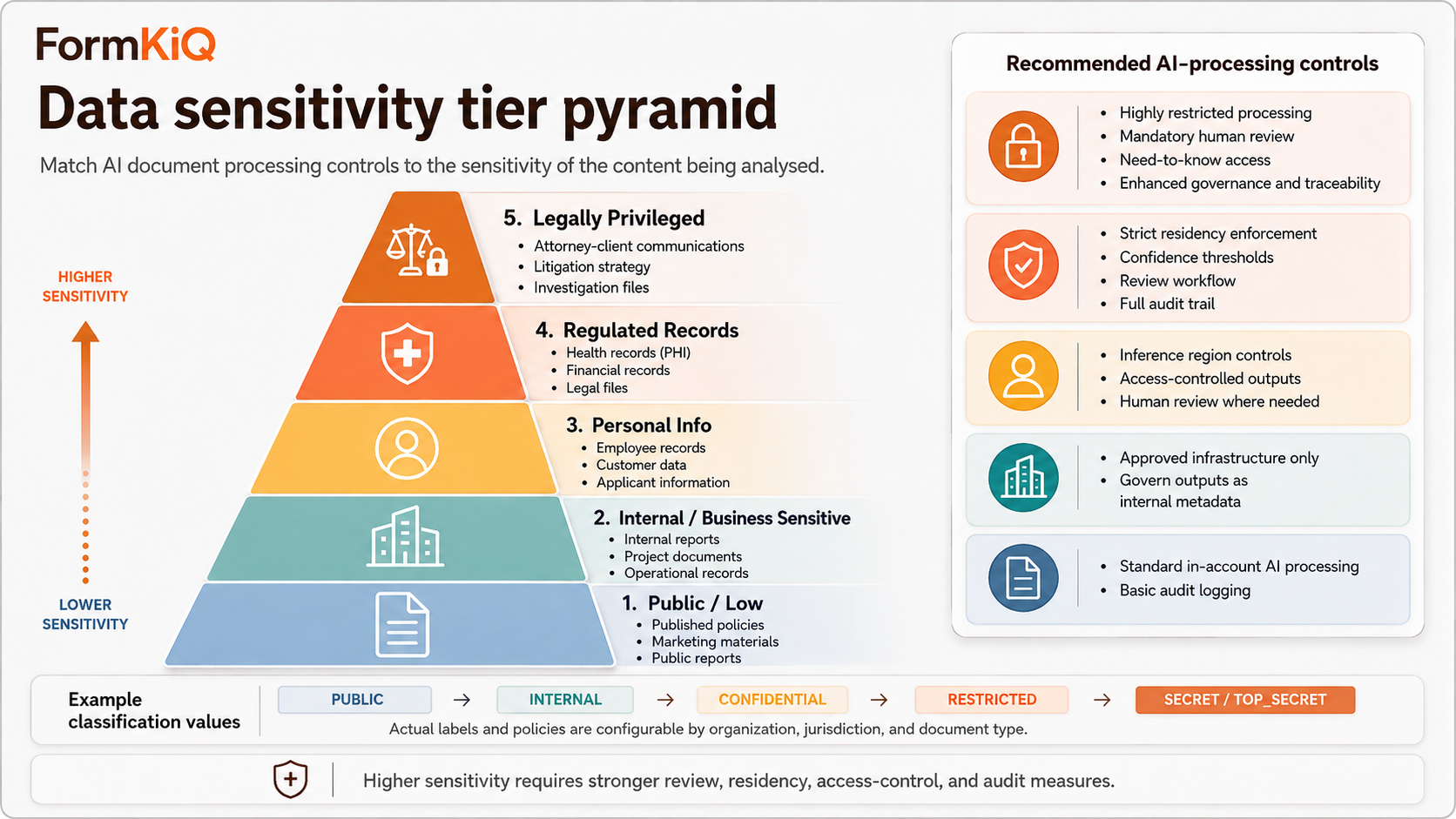

Before applying AI to documents, organisations should understand the sensitivity of the content being processed. The goal is not to avoid AI entirely. The goal is to match the AI processing model to the risk level of the data.

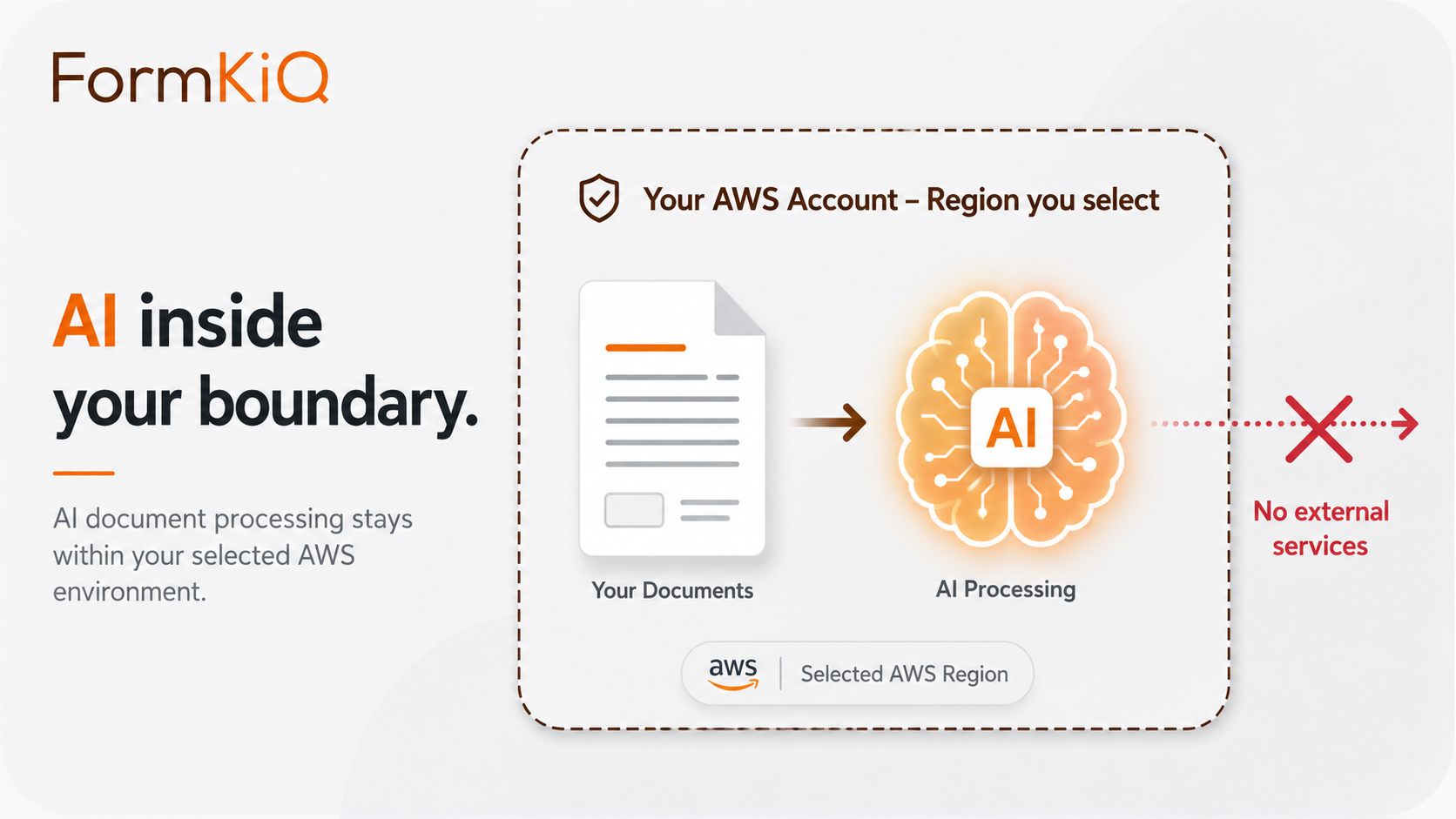

FormKiQ's AI processing through Amazon Bedrock runs entirely within the organisation's AWS account, with inference region controls that keep document content within the selected geographic boundary. The risk assessment for AI processing is the same as the risk assessment for document storage — the data doesn't move to a new environment for AI processing.

An organisation might allow AI summarisation on published policies and public board materials with relatively low risk, while applying stricter controls — including mandatory human review of all AI outputs — to HR files, legal agreements, health-related records, or customer claims. In FormKiQ, this is configured per-document-type: AI processing rules are attached to document type definitions, so different document types receive different AI treatment automatically.
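To make the idea of per-document-type rules concrete, here is a minimal sketch in Python. It is not FormKiQ's configuration schema: the `AiRule` class, the document type names, and the task names are all hypothetical, chosen only to mirror the example above. The one design choice worth noting is the default, where an unrecognised document type gets no AI tasks and mandatory review.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AiRule:
    """Hypothetical per-document-type AI policy (not a FormKiQ schema)."""
    tasks: tuple              # AI tasks permitted for this document type
    review_all_outputs: bool  # True means every AI output needs human review


# Illustrative mapping only; type and task names are assumptions.
AI_RULES = {
    "published_policy": AiRule(tasks=("classify", "summarise"), review_all_outputs=False),
    "hr_file": AiRule(tasks=("classify",), review_all_outputs=True),
    "legal_agreement": AiRule(tasks=("classify", "extract"), review_all_outputs=True),
}

# Fail closed: unknown document types get no AI tasks and mandatory review.
DEFAULT_RULE = AiRule(tasks=(), review_all_outputs=True)


def rule_for(document_type: str) -> AiRule:
    """Look up the rule for a document type, falling back to the safe default."""
    return AI_RULES.get(document_type, DEFAULT_RULE)


def is_task_allowed(document_type: str, task: str) -> bool:
    """Check whether an AI task may run on documents of this type."""
    return task in rule_for(document_type).tasks
```

With this mapping, `is_task_allowed("hr_file", "summarise")` is false while the same task is allowed for `"published_policy"`, which is the kind of differentiated treatment the paragraph above describes.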

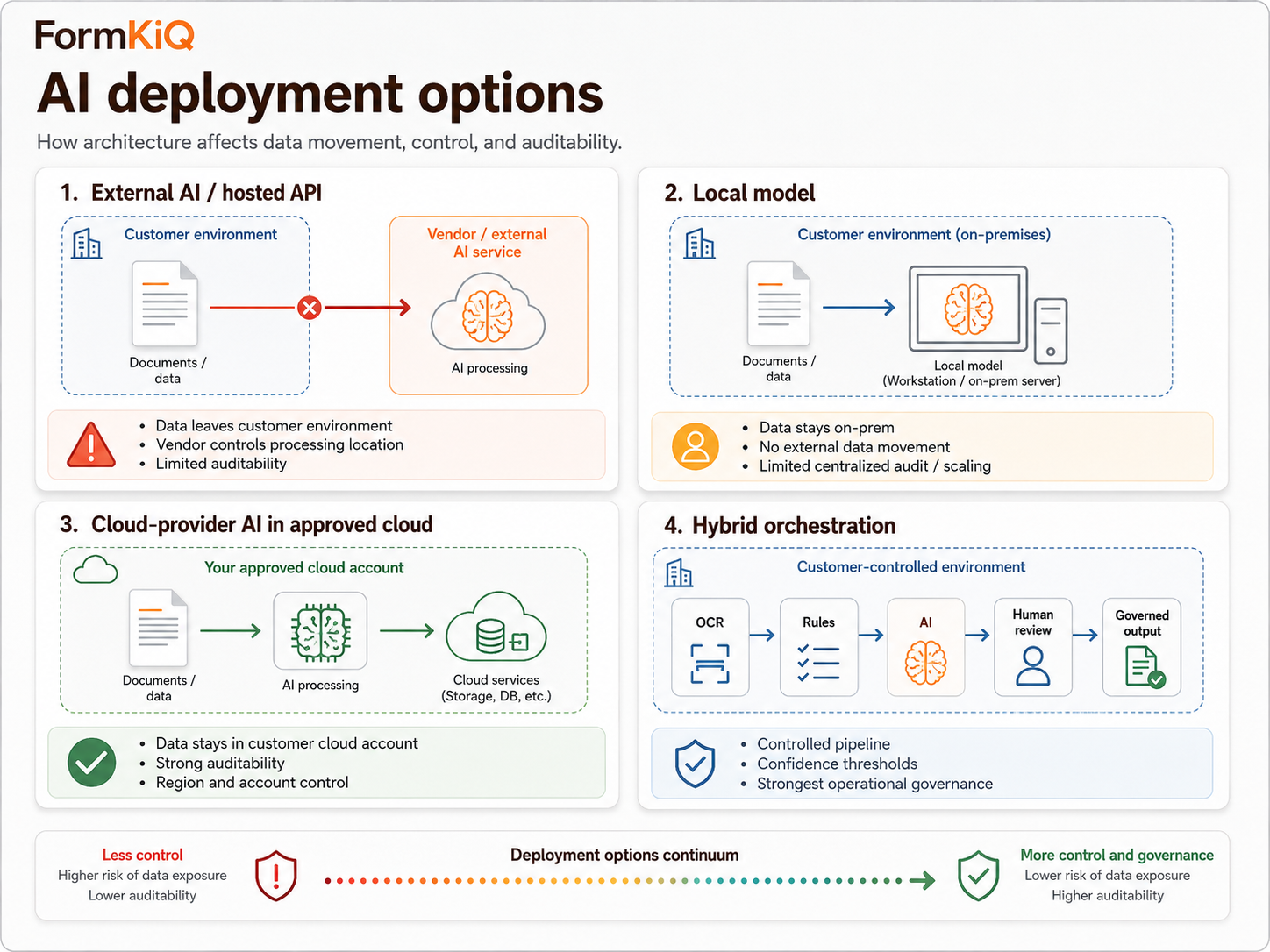

3. Understand the AI Deployment Options

Enterprise buyers do not have only one way to apply AI to documents. The architecture matters because it affects data movement, security, auditability, cost, and operational complexity.

FormKiQ uses the third and fourth options in combination. OCR (Tesseract or Amazon Textract) handles text extraction. Amazon Bedrock handles AI classification, extraction, summarisation, and analysis. Workflows, rulesets, and human review queues provide the orchestration. Everything runs within the customer's AWS account — no document content is sent to external services.

4. Keep Documents in Your Environment

For cautious buyers, one of the most important architectural questions is where document content goes during AI processing. In some AI tools, documents are uploaded to a vendor-controlled environment. That raises questions many enterprises struggle to answer satisfactorily.

| Concern | Question to ask | FormKiQ |
|---|---|---|
| Data residency | Where is document content processed geographically? | Processing occurs in your AWS account, in the region you select, with Bedrock inference region controls specifying the processing region |
| Data retention by AI provider | Does the AI provider retain document content after processing? | Amazon Bedrock does not retain customer inputs or outputs; no document content is stored outside your account |
| Model training | Is document content used to train or improve AI models? | Amazon Bedrock does not use customer data for model training |
| Vendor access | Can the AI vendor's staff access document content? | No FormKiQ personnel access document content during normal operation; Bedrock processes within your account without external access |
| Subprocessor involvement | Are additional third parties involved in processing? | Processing occurs through AWS services within your account, with no additional subprocessors for AI processing |
| Auditability | Can every AI processing event be logged and audited? | CloudTrail records API calls; Bedrock invocation logs capture processing events; FormKiQ's audit trail records document-level actions (all in your account) |
| Cross-border transfer | Does AI processing involve moving data across jurisdictional boundaries? | Inference region controls ensure processing occurs within the same region as document storage |
| Incident response | If something goes wrong, who investigates and how? | Your security team investigates in your infrastructure using your CloudTrail, CloudWatch, and FormKiQ audit logs |

The preferred pattern for enterprise AI document processing is: documents stay in the customer-controlled environment. AI processing occurs within approved infrastructure. Outputs are stored, reviewed, and governed like any other system-generated metadata.

If a Canadian public-sector organisation has a requirement to store and process records in Canada, FormKiQ deploys to ca-central-1 (Montreal) or ca-west-1 (Calgary), and Bedrock inference region controls ensure AI processing occurs within the same Canadian region. The organisation doesn't need to evaluate whether an external AI service will honour its data residency requirements — the architecture enforces residency by design.
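A residency requirement like this can also be checked as part of a deployment review. The sketch below is a plain Python pre-flight check, not a FormKiQ or AWS API; the function name and the idea of passing the two regions in as strings are assumptions made for illustration. It flags any region outside the approved set and any mismatch between the storage and inference regions.

```python
# Approved regions for the Canadian example above.
ALLOWED_REGIONS = frozenset({"ca-central-1", "ca-west-1"})


def residency_problems(storage_region, inference_region, allowed=ALLOWED_REGIONS):
    """Return a list of residency problems; an empty list means the config passes."""
    problems = []
    if storage_region not in allowed:
        problems.append(f"storage region {storage_region} is outside the approved set")
    if inference_region not in allowed:
        problems.append(f"inference region {inference_region} is outside the approved set")
    if storage_region != inference_region:
        problems.append("inference region differs from storage region")
    return problems


# A compliant configuration produces no findings.
print(residency_problems("ca-central-1", "ca-central-1"))
# A misconfigured inference region produces two findings.
print(residency_problems("ca-central-1", "us-east-1"))
```

A check like this complements the architectural controls; it does not replace them, but it gives reviewers a simple artefact to attach to a change approval.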

5. Treat AI Output as Governed Information

AI outputs — classifications, extracted metadata, summaries, risk flags, obligation lists, sensitivity labels, confidence scores — should not be treated as casual notes if they influence business decisions. If an AI output affects a workflow, decision, record, or obligation, it should be governed.

| AI output | Governance question | FormKiQ |
|---|---|---|
| Document classification | Does the classification drive access controls, retention, or workflow routing? | AI-generated classifications become document metadata: searchable, auditable, and editable by authorised users |
| Extracted metadata | Does the extracted value (date, amount, party name) trigger a business action? | Extracted values stored as structured metadata on the document record; low-confidence values routed to human review before becoming authoritative |
| Summaries | Is the summary used for triage, decision support, or distribution? | Summaries stored as document metadata; accessible through the same access controls as the source document |
| Sensitivity classification | Does the sensitivity label trigger access restrictions? | AI-detected sensitivity triggers ABAC policies automatically, restricting access based on the classified sensitivity level |
| Obligation extraction | Do extracted obligations drive milestone tracking, alerting, or compliance monitoring? | Obligations become structured metadata with due dates, responsible parties, and status tracking; configurable alerts notify stakeholders |
| Confidence scores | Does the confidence score determine whether human review is required? | Confidence thresholds configurable per document type; low-confidence outputs routed to review queues; all routing decisions audit-logged |

FormKiQ's architecture treats AI outputs as first-class metadata. They're stored on the document record, searchable through the same indexes as manually entered metadata, subject to the same access controls, and visible in the same audit trail. There is no separate "AI output" layer that operates outside the governance model.

An AI-generated contract summary may be acceptable as a convenience view. But an AI-extracted renewal date is different — if that date triggers a reminder, task, escalation, or commercial decision, FormKiQ tracks whether the value was reviewed, when it was approved, and who approved it. The renewal date is governed metadata, not a disposable AI output.

6. Use Human Review During Pilots, Then Evolve

Human review is essential during early AI pilots. It helps the organisation understand accuracy, failure patterns, edge cases, and user trust. But full manual review of every AI output may not remain practical at scale.

| Phase | Review model | What it achieves |
|---|---|---|
| Pilot | Human review of most or all AI outputs | Establishes accuracy baseline; identifies failure patterns; builds reviewer confidence; validates business fit |
| Controlled production | Human review for high-risk, low-confidence, or exception cases; automated acceptance for high-confidence, low-risk outputs | Reduces review burden while maintaining control over the outputs that matter most |
| Operationalised workflow | AI orchestration with validation rules, confidence thresholds, sampling, periodic quality audits, and continuous improvement | Sustainable production model; human judgement focused where it creates the most value |

FormKiQ supports all three phases within the same platform. During the pilot, every AI output can be routed to a review queue. As confidence grows, the confidence threshold is adjusted — high-confidence outputs are accepted automatically while low-confidence outputs continue to require review. In the operationalised phase, sampling rules and periodic quality reviews replace full review.

During a pilot, every AI-extracted clause from a sample of supplier contracts may be reviewed by legal operations. After enough results are analysed, the organisation may decide that certain simple fields — counterparty name, effective date, governing law — only need exception-based review, while termination rights, assignment restrictions, and indemnity clauses still require human confirmation. In FormKiQ, this is configured through per-field confidence thresholds and document-type-specific review rules.
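The routing logic described here is simple enough to sketch. The following Python is an illustration under stated assumptions, not FormKiQ's implementation: the field names, the threshold values, and the convention that `None` means "always review" are all hypothetical. Fields missing from the table also go to review, so a new field never bypasses a human by accident.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Extraction:
    field: str
    value: str
    confidence: float  # 0.0 to 1.0, as reported by the AI step


# Hypothetical per-field thresholds. None means the field always needs review.
FIELD_THRESHOLDS = {
    "counterparty_name": 0.90,
    "effective_date": 0.90,
    "governing_law": 0.85,
    "termination_rights": None,
    "assignment_restrictions": None,
    "indemnity": None,
}


def route(extraction: Extraction, thresholds=FIELD_THRESHOLDS) -> str:
    """Return "accept" or "review" for a single extracted value."""
    threshold = thresholds.get(extraction.field)  # unknown fields yield None
    if threshold is None:
        return "review"
    return "accept" if extraction.confidence >= threshold else "review"
```

For example, `route(Extraction("governing_law", "Ontario", 0.92))` returns `"accept"`, while any indemnity clause returns `"review"` regardless of confidence. Adjusting a threshold, or replacing `None` with a number once accuracy data supports it, is how the review model would evolve from pilot to production in this sketch.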

7. Design for Auditability from the Beginning

AI document processing should be explainable at the process level, even when the underlying model is probabilistic. Organisations should be able to answer: which documents were processed? Which model was used? What output was produced? Who reviewed it? What changed after review?

| Audit element | What to record | Where FormKiQ records it |
|---|---|---|
| Document processed | Document identifier, document type, version | FormKiQ document audit trail (in your AWS account) |
| AI service used | Model identifier, inference configuration, prompt template version | Bedrock invocation logs + FormKiQ processing metadata (in your AWS account) |
| Output produced | Classification, extracted values, summary, sensitivity label, confidence score | Document metadata record, searchable and auditable alongside all other document metadata |
| Reviewer action | Who reviewed, when, what they changed, what they approved or rejected | FormKiQ workflow audit trail: approval decisions with reviewer identity, timestamp, and before/after state |
| Downstream effect | Did the output trigger a workflow, notification, access change, or retention action? | FormKiQ workflow and event logs: every triggered action recorded with the AI output that triggered it |
| Infrastructure events | API calls, authentication, service interactions | AWS CloudTrail (in your AWS account) |

Because FormKiQ deploys into your own AWS account, all of this audit evidence is in infrastructure you own. Your compliance team queries it directly — they don't request reports from a vendor.

If an AI-generated obligation list is later questioned — during litigation, audit, or a contract dispute — the organisation can determine which document version was processed, which Bedrock model was used, what the model returned, what the reviewer changed, and which final obligations were accepted into the system of record. The complete processing history is in the organisation's own audit trail.
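As a rough illustration of what such a processing history needs to hold, the sketch below models one audit record in Python. The `AiAuditRecord` class and its field names are hypothetical and do not reflect FormKiQ's audit schema; they simply mirror the questions listed above (which version, which model, what was returned, what the reviewer changed).

```python
import json
from dataclasses import asdict, dataclass, field, replace
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AiAuditRecord:
    """Hypothetical audit record for one AI processing event."""
    document_id: str
    document_version: str
    model_id: str
    prompt_template_version: str
    output: dict                       # what the model returned
    reviewer: Optional[str] = None     # who reviewed, if anyone
    reviewed_at: Optional[str] = None  # ISO 8601 timestamp (UTC)
    changes: dict = field(default_factory=dict)  # per-field before/after


def record_review(record: AiAuditRecord, reviewer: str, corrections: dict) -> AiAuditRecord:
    """Return a new record capturing the reviewer and each changed value."""
    changes = {
        name: {"before": record.output.get(name), "after": value}
        for name, value in corrections.items()
        if record.output.get(name) != value
    }
    return replace(
        record,
        output={**record.output, **corrections},
        reviewer=reviewer,
        reviewed_at=datetime.now(timezone.utc).isoformat(),
        changes=changes,
    )


original = AiAuditRecord(
    document_id="doc-123",
    document_version="v2",
    model_id="example-model-id",  # placeholder, not a real model identifier
    prompt_template_version="obligations-v1",
    output={"renewal_date": "2025-01-01", "governing_law": "Ontario"},
)
reviewed = record_review(original, "reviewer@example.com", {"renewal_date": "2025-06-01"})
print(json.dumps(asdict(reviewed), indent=2))
```

The original record is left untouched and the review produces a new one, so both the model's raw output and the accepted values remain available if the result is questioned later.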

8. Separate Experimentation from Production

AI pilots often begin informally, but production document processing needs clear boundaries. A pilot can ask "Does this work?" A production process must ask "Can we operate this safely, consistently, and defensibly?"

| Dimension | Pilot | Production |
|---|---|---|
| Document scope | Small, controlled sample, often non-sensitive | Defined document types with known sensitivity levels |
| AI tasks | Exploratory: testing different prompts and models | Approved tasks with defined prompt templates and expected outputs |
| Review model | Full or near-full human review | Risk-based review with confidence thresholds, validation rules, and sampling |
| Data handling | May use isolated test environment | Must comply with production data handling, residency, and security requirements |
| Audit logging | Informal tracking of results | Formal audit trail for every processing event, review decision, and output acceptance |
| Error handling | Manual observation and correction | Defined exception queues, escalation paths, and retry procedures |
| Performance expectations | Learning and calibration | Defined accuracy targets, processing throughput, and SLA expectations |

A team might experiment with five different prompt formats for extracting lease obligations using a sample of 50 non-sensitive leases. In production, the organisation uses the validated prompt template, applies it to all incoming leases via a document action triggered at ingestion, routes low-confidence extractions to a legal review queue, and retains the full processing history in the audit trail.
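Choosing the "validated" prompt template implies some measurement during the pilot. One simple way to do this, sketched below in Python, is to compare each prompt variant's extracted values against the values reviewers confirmed. The function name and the tuple layout are assumptions for illustration, and exact-match comparison is a deliberate simplification; real pilots may need field-specific matching rules.

```python
def accuracy_by_prompt(results):
    """Compute exact-match accuracy per prompt variant.

    `results` is an iterable of (prompt_id, extracted_value, reviewed_value)
    tuples, where reviewed_value is what a human reviewer confirmed.
    """
    totals = {}
    correct = {}
    for prompt_id, extracted, reviewed in results:
        totals[prompt_id] = totals.get(prompt_id, 0) + 1
        if extracted == reviewed:
            correct[prompt_id] = correct.get(prompt_id, 0) + 1
    return {p: correct.get(p, 0) / totals[p] for p in totals}


# Hypothetical pilot results for two prompt variants on the same two leases.
pilot_results = [
    ("prompt-a", "2026-03-31", "2026-03-31"),
    ("prompt-a", "90 days", "90 days"),
    ("prompt-b", "2026-03-31", "2026-03-31"),
    ("prompt-b", "60 days", "90 days"),
]
print(accuracy_by_prompt(pilot_results))
```

Recording these figures alongside the prompt template version gives the organisation evidence for why one template was promoted to production.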

9. Use Metadata as the Control Layer

AI becomes more useful when it is connected to a strong metadata model. Without metadata governance, AI outputs become inconsistent, difficult to search, and hard to trust. With metadata governance, AI becomes a powerful accelerator for document classification, routing, and lifecycle management.

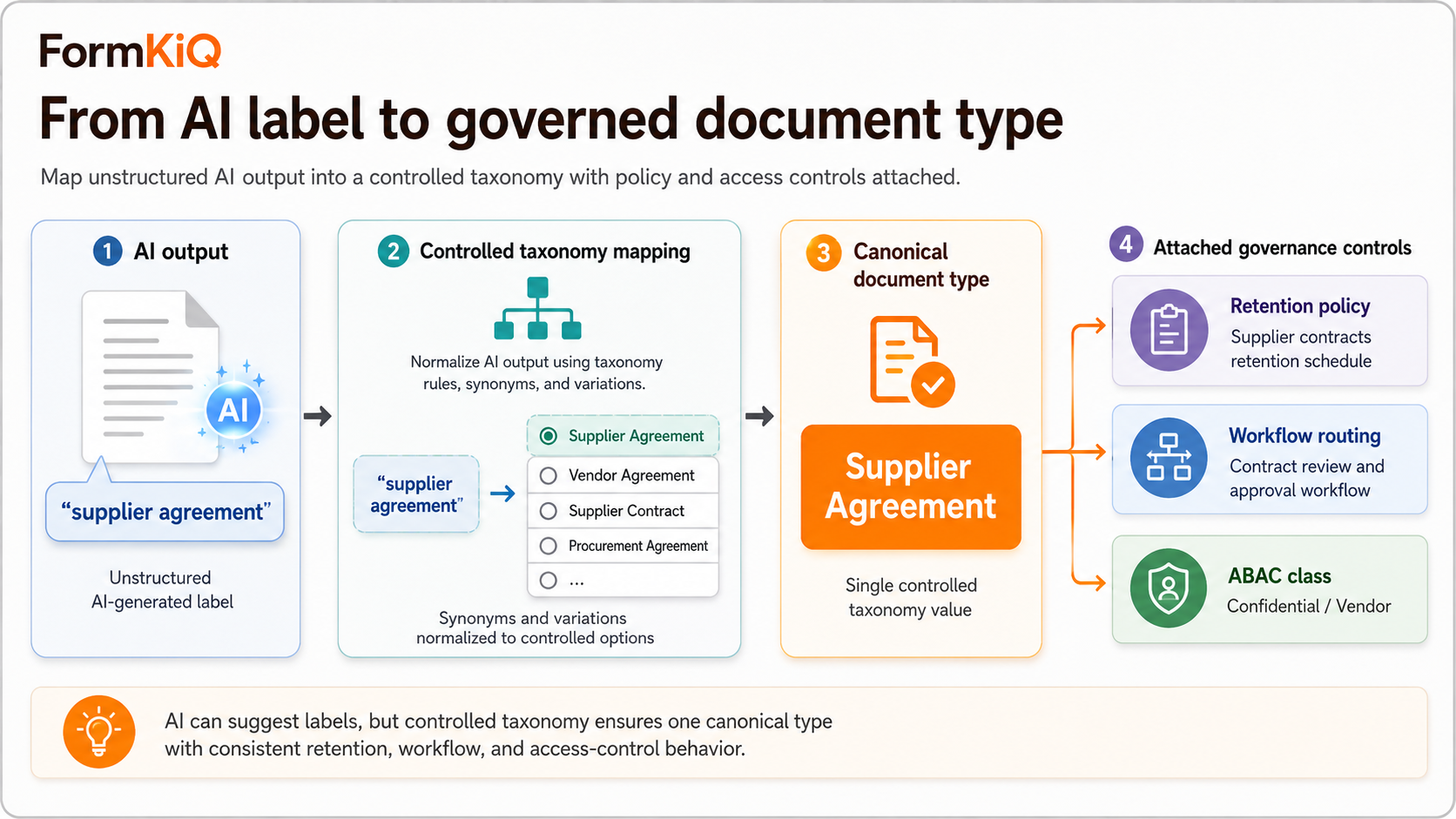

AI may identify a document as a "supplier agreement," but FormKiQ's document type taxonomy ensures that label maps to a specific set of governance rules: the document inherits the supplier agreement retention policy, enters the contract review workflow, and is classified for ABAC access at the procurement and legal level. The AI did the classification; the metadata architecture makes it actionable.
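The mapping from an AI label to governance rules can be pictured as a lookup against a controlled taxonomy. The Python below is a sketch only: the rule names, keys, and the `apply_classification` function are hypothetical and are not FormKiQ's data model. A label that is not in the taxonomy is not applied; the document is flagged for manual classification instead.

```python
# Hypothetical taxonomy: document type -> governance rules it inherits.
GOVERNANCE_RULES = {
    "supplier_agreement": {
        "retention_policy": "supplier_agreement_retention",
        "workflow": "contract_review",
        "access_groups": ["procurement", "legal"],
    },
}


def apply_classification(document: dict, ai_label: str) -> dict:
    """Return a copy of the document with governance rules for the AI label."""
    rules = GOVERNANCE_RULES.get(ai_label)
    if rules is None:
        # Labels outside the taxonomy are never applied automatically.
        return {**document, "document_type": None, "needs_manual_classification": True}
    return {
        **document,
        "document_type": ai_label,
        **rules,
        "needs_manual_classification": False,
    }


doc = apply_classification({"id": "doc-77"}, "supplier_agreement")
print(doc["workflow"], doc["access_groups"])
```

Keeping the taxonomy separate from the model means the AI can only choose among labels the organisation has already defined governance for.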

10. Align AI Processing with Access Control

AI should not become a shortcut around permissions. If a user is not allowed to access a document, they should not be able to access its AI-generated summary, extracted metadata, or answers through a knowledge search interface. AI outputs can reveal sensitive document content even when the original file is protected.

| Scenario | Risk | FormKiQ |
|---|---|---|
| AI summaries expose restricted content | A user who can't open a document reads its AI summary instead | Summaries inherit the document's ABAC access controls: if you can't see the document, you can't see its summary |
| Extracted metadata reveals sensitive information | Metadata fields (patient names, compensation figures, legal exposure) visible to users without document access | Metadata visibility governed by the same ABAC policies as the document itself; sensitive metadata fields can be independently restricted |
| Knowledge search returns restricted content | A user queries the KnowledgeBase and receives answers drawn from documents they shouldn't be able to access | FormKiQ's KnowledgeBase respects document-level access controls; search results are filtered based on the querying user's permissions |
| AI processing creates copies outside the governance model | Document content extracted by AI and stored in an ungoverned location | AI outputs stored as metadata on the governed document record, with no separate, ungoverned copies of content |

A user who cannot open an HR investigation file should not be able to use a KnowledgeBase query to summarise the case, list the people involved, or extract sensitive findings. In FormKiQ, the KnowledgeBase query respects the same ABAC boundaries as direct document access: the investigation file is invisible to unauthorised users regardless of whether they access it directly or through AI.
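The key point is ordering: the permission filter has to run before any content reaches a summary or answer. The Python sketch below shows that pattern in its simplest form. It is not FormKiQ's ABAC engine; the attribute names, the `required` structure, and both functions are assumptions made for illustration.

```python
def can_access(user_attrs: dict, required: dict) -> bool:
    """True if, for every required attribute, the user's value is allowed."""
    return all(user_attrs.get(name) in allowed for name, allowed in required.items())


def filter_for_user(user_attrs: dict, candidates: list) -> list:
    """Drop documents the user may not open, before any answer is built."""
    return [doc for doc in candidates if can_access(user_attrs, doc["required"])]


# Hypothetical search candidates with the attributes each one requires.
candidates = [
    {"id": "policy-001", "summary": "Travel policy", "required": {}},
    {
        "id": "hr-case-042",
        "summary": "Investigation findings",
        "required": {"department": {"hr"}, "role": {"investigator"}},
    },
]

analyst = {"department": "finance", "role": "analyst"}
investigator = {"department": "hr", "role": "investigator"}

print([d["id"] for d in filter_for_user(analyst, candidates)])
print([d["id"] for d in filter_for_user(investigator, candidates)])
```

Because the restricted record is removed from the candidate set, nothing derived from it (summary, extracted names, findings) can appear in the analyst's results.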

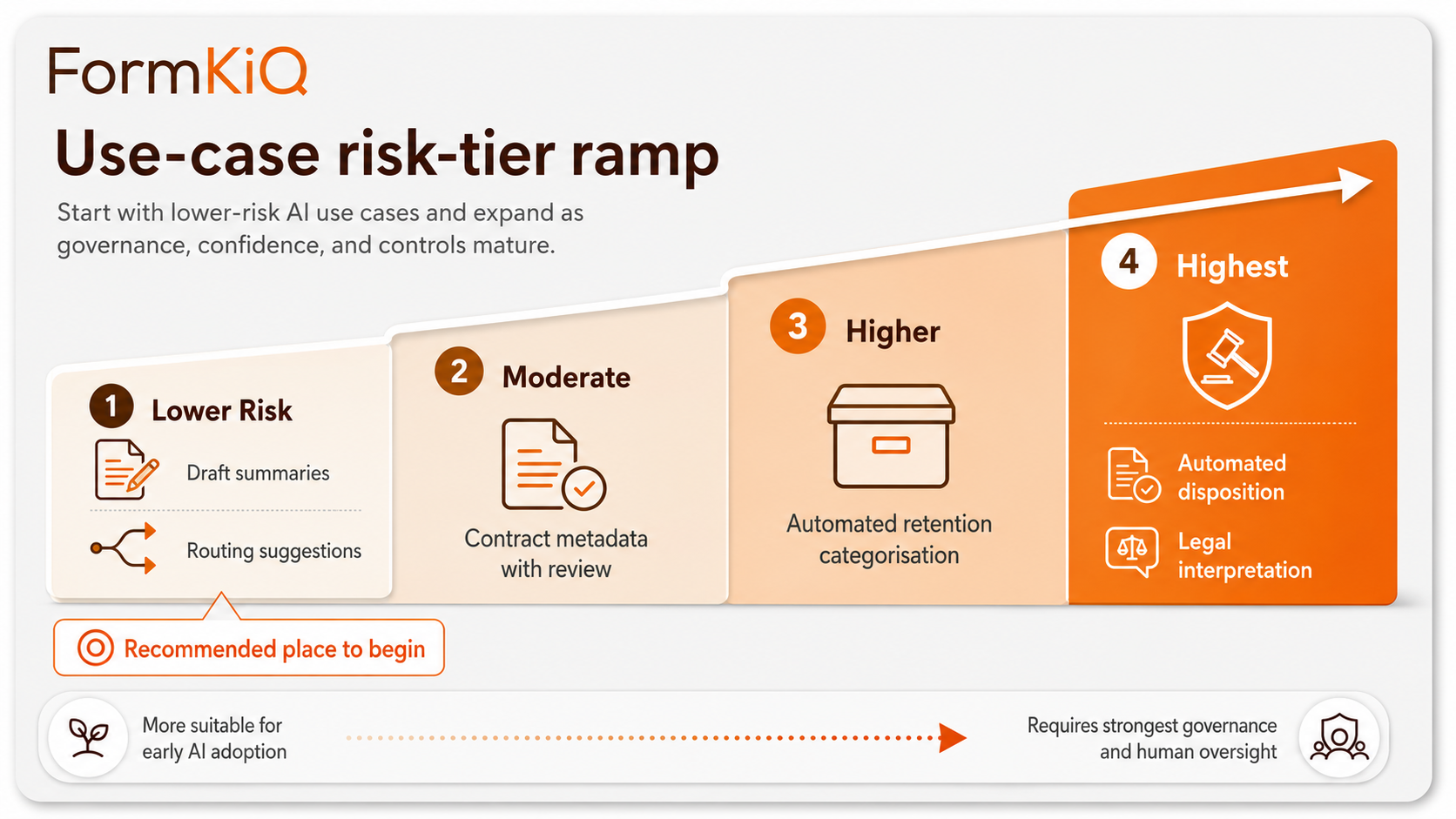

11. Choose Use Cases That Build Confidence

Cautious organisations do not need to begin with the most complex or sensitive AI use case. The goal is to build institutional trust through controlled success.

FormKiQ's per-document-type AI configuration supports this graduated approach. An organisation can enable AI classification for correspondence (lower risk) while keeping AI analysis for contracts (higher risk) in a separate pilot with full human review — both within the same deployment, governed by the same platform.

12. Evaluate Vendors on Control, Not Just Model Capability

Many AI discussions focus on model performance. That matters, but enterprise buyers should also evaluate the surrounding control environment. The right question is not only "How good is the AI?" — it is: Can this AI capability operate inside our governance, security, and compliance framework?

| Area | Questions to ask | FormKiQ |
|---|---|---|
| Data handling | Where is content processed? Is it retained by the provider? Is it used for training? Can data residency be met? | Processing in your AWS account; Bedrock does not retain content or use it for training; 20 supported regions with inference region controls |
| Security and access | Does AI respect existing access controls? Are outputs protected? Is data encrypted? | ABAC applies to documents and AI outputs; KMS customer-managed encryption at rest and in transit; outputs inherit document access policies |
| Governance | Can outputs be reviewed before acceptance? Can users correct AI values? Is there an audit trail? | Confidence-based routing to review queues; reviewer corrections tracked; complete audit trail for every AI processing event |
| Operations | Can AI tasks be monitored? Can failures be handled? Can models be changed? Can use cases be expanded incrementally? | CloudWatch monitoring; exception queues; configurable model selection; per-document-type AI configuration |
| Compliance | Can the deployment align with records, retention, and privacy policies? Can processing history be reconstructed? | AI outputs governed as document metadata; retention policies apply to outputs; full processing history in your CloudTrail and FormKiQ audit trail |

Two vendors may both offer contract metadata extraction. One requires uploading contracts to a shared SaaS environment with limited audit detail. The other — FormKiQ — processes contracts within the customer's own AWS account, stores prompts and outputs as auditable records, respects document-level ABAC for all AI outputs, and routes low-confidence extractions to human review before metadata becomes authoritative. The demo output may look similar, but the control posture is fundamentally different.

13. Build a Phased Roadmap

A controlled AI document processing strategy develops in phases. Each phase builds on the confidence and infrastructure established in the previous one.

| Phase | Focus | Key activities | FormKiQ |
|---|---|---|---|
| 1. Assess and prioritise | Identify where AI can reduce manual effort or improve consistency | Rank use cases by business value, data sensitivity, and risk; select one or two where value is clear and risk is manageable | Pilot deployment on FormKiQ Core or Essentials; document collection and metadata schema design |
| 2. Establish control requirements | Define the governance framework for AI processing | Set requirements for data residency, access control, audit logging, human review, metadata governance, and retention | FormKiQ Advanced deployment with ABAC, KMS encryption, audit trail, and workflow configuration |
| 3. Pilot a narrow workflow | Test AI on a controlled document set with full human review | Measure accuracy, user acceptance, review effort, and operational fit; refine prompts and confidence thresholds | AI Processing and Analysis module configured for selected document types; review queues; accuracy tracking |
| 4. Move to governed production | Formalise the AI workflow as an operational process | Define approved prompts, validation rules, review requirements, exception handling, sampling, and audit logging | Production workflow configuration with confidence thresholds, exception queues, validation rules, and sampling |
| 5. Expand carefully | Add document types, departments, and AI tasks | Extend proven patterns to new use cases; maintain the governance framework while broadening scope | Additional document type configurations; new AI capabilities (summarisation, analysis); broader user access |

An organisation might begin by piloting AI classification on incoming correspondence (Phase 3), move to governed production with confidence-based routing (Phase 4), then extend the pattern to contract metadata extraction, invoice processing, and policy gap analysis (Phase 5) — each using the same governance infrastructure with use-case-specific configuration.

14. Practical Checklist for Cautious AI Adoption

Use this checklist before approving an AI document processing initiative:

AI Document Processing — Pre-Approval Checklist · Print and complete before sign-off

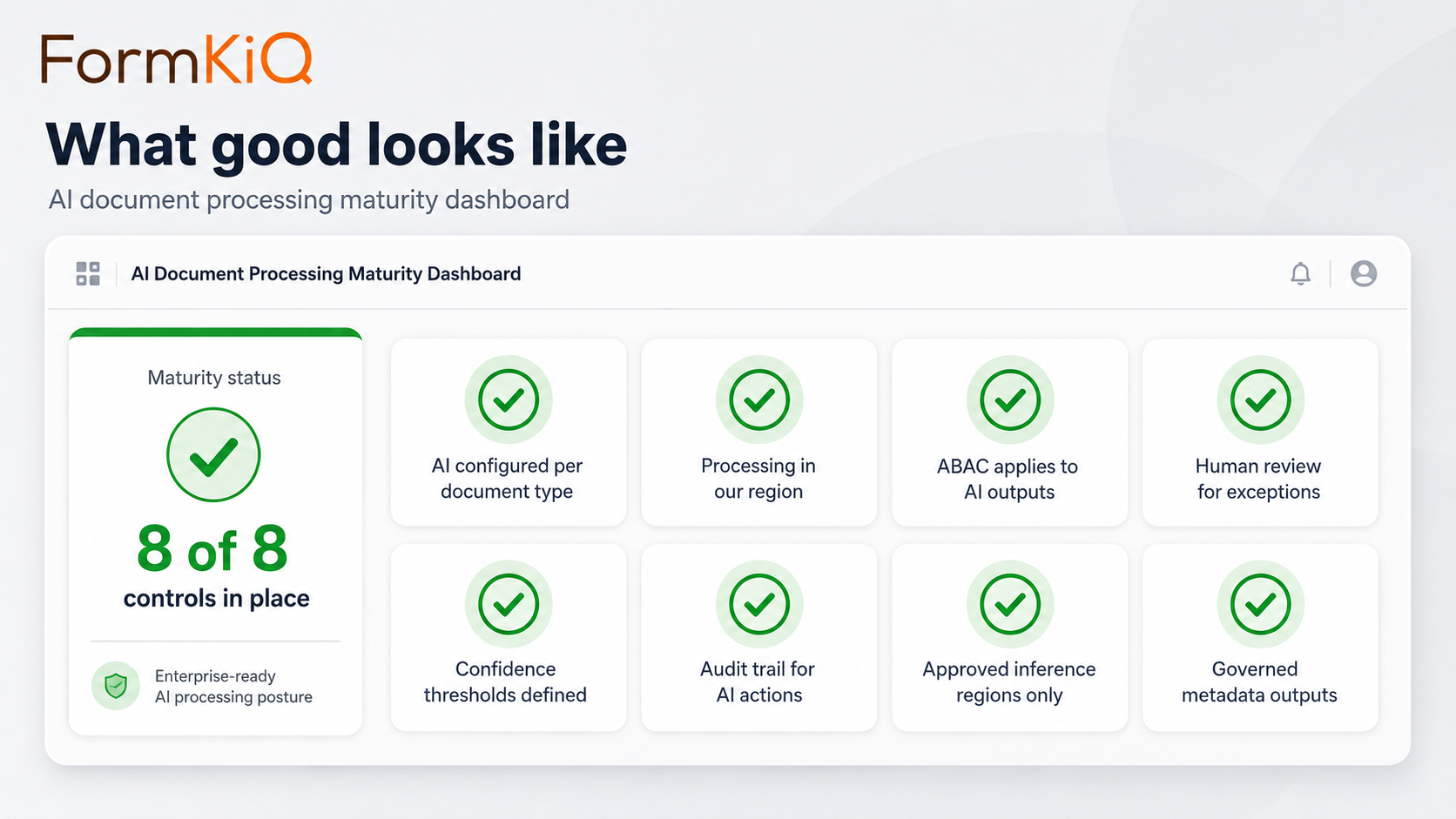

15. What Good Looks Like

A mature AI document processing environment allows an organisation to say:

How FormKiQ Supports Controlled AI Document Processing

FormKiQ provides the architecture described throughout this guide — AI document processing within a governed document management platform, deployed into your own AWS account.

The AI Processing Stack

| Layer | Technology | What It Does | Availability |
|---|---|---|---|
| OCR | Tesseract | Extracts raw text from scanned documents and images | All editions |
| Structured extraction | Amazon Textract | Extracts tables, form fields, key-value pairs with layout understanding | Essentials, Advanced, Enterprise |
| AI classification | Amazon Bedrock | Identifies document type and applies classification metadata | Advanced / Enterprise |
| AI extraction | Amazon Bedrock | Extracts entities from unstructured text as structured metadata | Advanced / Enterprise |
| AI sensitivity | Amazon Bedrock | Identifies documents containing PII, PHI, financial data, or privileged content | Advanced / Enterprise |
| AI summarisation | Amazon Bedrock | Generates concise summaries for triage, review, and discovery | Advanced / Enterprise |
| AI analysis | Amazon Bedrock | Analyses documents against criteria: compliance, risk, completeness, obligations | Advanced / Enterprise |

Key Architectural Principles

| Principle | What it means |
|---|---|
| Processing in your account | Every AI processing step runs within your AWS account through Amazon Bedrock; documents never leave your environment |
| Inference region controls | You specify which AWS region is used for AI processing, ensuring content stays within your data residency boundary |
| No model training on your data | Amazon Bedrock does not use customer data for model training |
| Outputs as governed metadata | AI outputs become structured metadata on the document record, subject to the same ABAC, retention, legal hold, and audit trail as all other metadata |
| Confidence-based routing | Low-confidence AI outputs routed to human review queues; high-confidence outputs accepted automatically based on configurable thresholds |
| Selective processing | AI capabilities configured per document type, per workflow, and per site in multi-tenant deployments |
| Complete audit trail | Every AI processing event logged in your CloudTrail, Bedrock invocation logs, and FormKiQ document audit trail |

FormKiQ Editions

| Capability | Core (Foundation) | Essentials (Operational) | Advanced (AI + Automation) | Enterprise (Full platform) |
|---|---|---|---|---|
| OCR & Extraction | | | | |
| OCR: Tesseract | ✓ | ✓ | ✓ | ✓ |
| OCR & Structured Extraction: Textract | - | ✓ | ✓ | ✓ |
| Custom Extraction Mappings | - | ✓ | ✓ | ✓ |
| AI Processing: Amazon Bedrock | | | | |
| AI Classification | - | - | ✓ | ✓ |
| AI Entity Extraction | - | - | ✓ | ✓ |
| AI Sensitivity Classification | - | - | ✓ | ✓ |
| AI Summarisation | - | - | ✓ | ✓ |
| AI Document Analysis | - | - | ✓ | ✓ |
| Inference Region Controls | - | - | ✓ | ✓ |
| Governance & Security | | | | |
| Workflows, Queues & Rulesets | - | ✓ | ✓ | ✓ |
| Encryption (KMS, in-transit & at-rest) | - | ✓ | ✓ | ✓ |
| ABAC Access Controls | - | ✓ | ✓ | ✓ |
| Enterprise | | | | |
| Multi-Instance & Multi-Region Licensing | - | - | ✓ | ✓ |
| Vendor-Managed & Hybrid Deployment | - | - | - | ✓ |
| Support | | | | |
| Support tier | Community | 2-biz-day SLA | Private Slack + 40 hrs onboarding | 8-biz-hr SLA + strategic support |

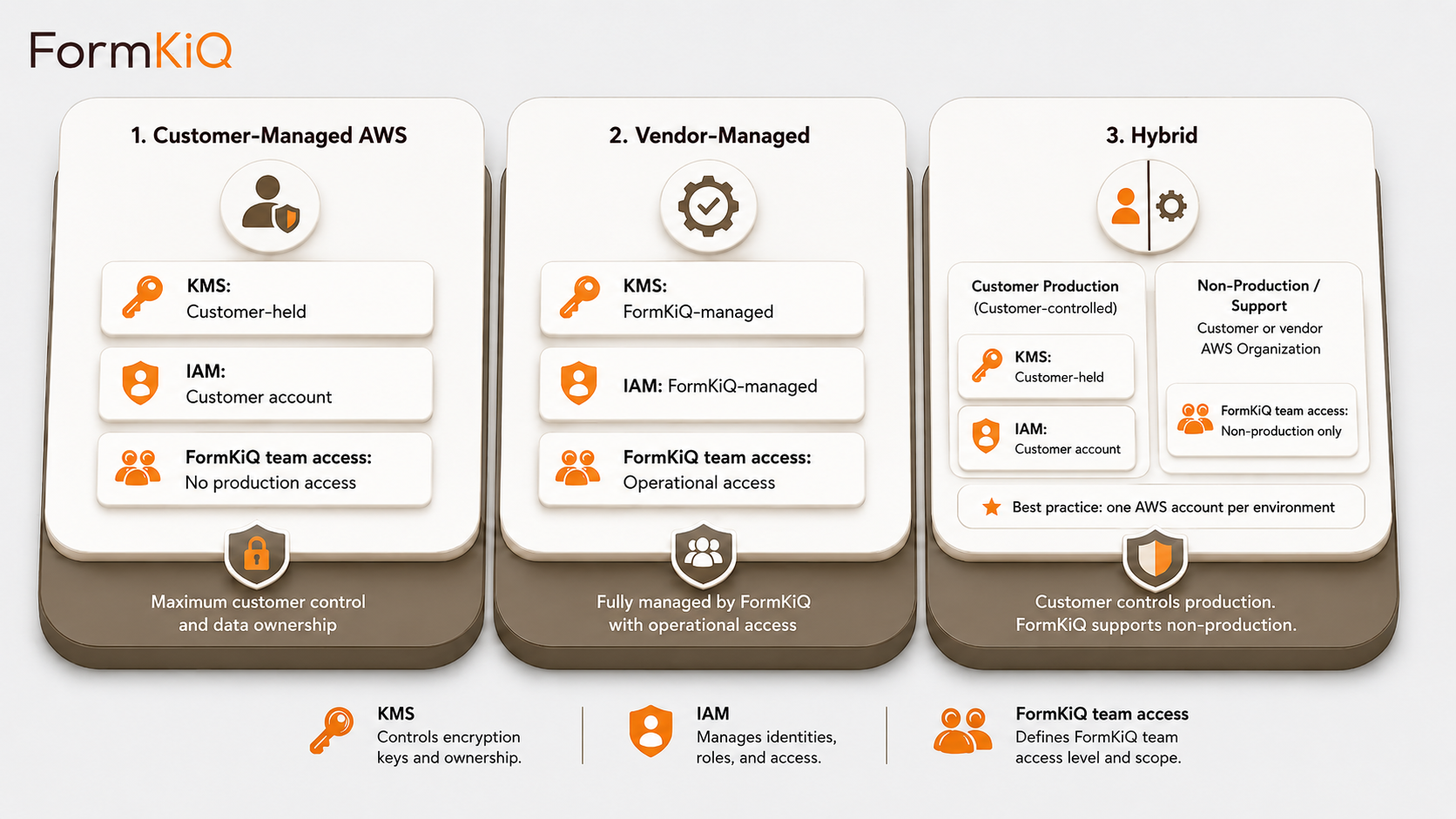

Deployment Models

Every deployment is a dedicated, isolated instance. FormKiQ does not operate a shared multi-tenant environment.

Getting Started

FormKiQ Core — including Tesseract OCR — can be deployed to your AWS account in fifteen to twenty minutes. Amazon Textract integration is available from Essentials onward. AI-powered classification, extraction, sensitivity detection, summarisation, and analysis are available on Advanced and Enterprise.

For organisations evaluating AI document processing on AWS, FormKiQ offers a Proof-of-Value program — a three-month deployment in a FormKiQ-managed AWS environment that provides full platform access in a non-production setting.

Frequently Asked Questions

Does document content leave my AWS account during AI processing?

No. Every layer of FormKiQ's AI processing — Tesseract OCR, Amazon Textract, Amazon Bedrock — runs within your own AWS account. Documents are never sent to external services. Inference region controls for Bedrock specify which AWS region is used for processing, ensuring content stays within your data residency boundary.

How does FormKiQ handle AI outputs that are wrong?

Every AI output includes a confidence score. Low-confidence outputs are routed to a human review queue for verification before metadata is finalised. Reviewers can accept, correct, or reject AI-generated values, and all corrections are tracked in the audit trail. High-confidence outputs that are later found to be incorrect can be corrected at any time, with the correction history preserved.

Can I start with basic OCR and add AI capabilities later?

Yes. FormKiQ's AI capabilities are layered and can be enabled independently. Deploy Core with Tesseract OCR, upgrade to Essentials for Textract structured extraction, and add AI classification, extraction, and analysis on Advanced, all within the same deployment. Each layer builds on the previous one without requiring migration.

How does AI processing align with GDPR and data protection requirements?

FormKiQ's AI processing through Bedrock runs within your AWS account with inference region controls that keep personal data within your selected region. AI processing can be configured per document type and purpose, supporting GDPR's purpose limitation principle. AI outputs include confidence scores and can be routed to human review, which supports the human-intervention safeguards that Article 22 attaches to automated decision-making. Personal data is not shared with external services or used for model training.

Can AI outputs be placed under legal hold?

Yes. AI outputs are stored as metadata on the document record. When a document is placed under legal hold, the entire record — including AI-generated metadata — is protected from modification or deletion. The AI processing history (what was processed, what was produced, what was reviewed) is part of the audit trail and is preserved alongside the document.

How does FormKiQ prevent AI from exposing restricted documents?

AI outputs inherit the document's ABAC access controls. If a user cannot access a document, they cannot see its AI-generated summary, extracted metadata, or answers derived from it through KnowledgeBase queries. The access control model applies uniformly to documents and their AI-generated metadata — there is no separate AI output layer that bypasses document-level permissions.